Tue May 27 00:00:00 UTC 2025

**Summary:**

AI is rapidly transforming satellites from passive tools into active, autonomous machines capable of decision-making and independent action in space. This transformation presents both opportunities (automated operations, self-repair, targeted intelligence) and significant challenges. The existing legal frameworks governing space activities, particularly the Outer Space Treaty of 1967, are inadequate to address the complexities of AI-driven satellite autonomy. Key issues include liability when an AI malfunctions, the potential for misinterpretation and escalation in geopolitically sensitive contexts, and the need for ethical data governance. The authors argue for urgent international collaboration to develop new governance frameworks, modeled on those in the aviation and maritime sectors, to ensure space safety and prevent an arms race in space while balancing innovation with precaution and shared stewardship.

**News Article:**

**AI-Powered Satellites Spark Legal and Geopolitical Concerns, Experts Warn**

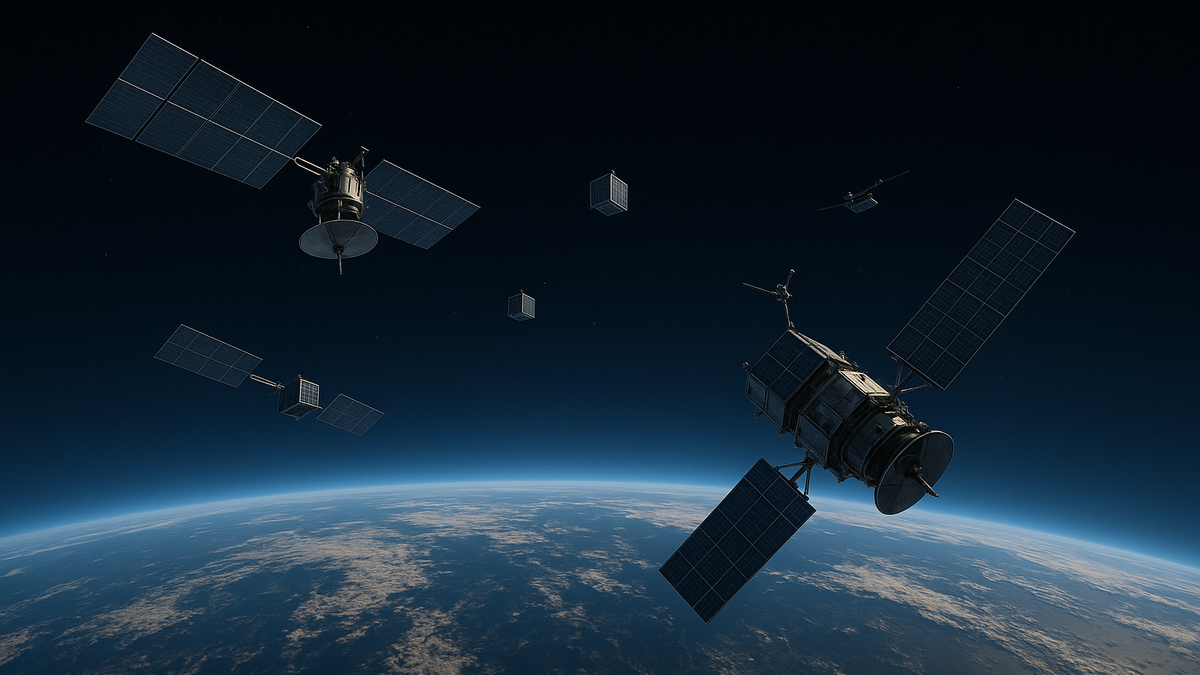

**New Delhi, May 27, 2025** – The increasing use of artificial intelligence in satellites is revolutionizing space operations but also raising critical legal and geopolitical questions, according to a report published today. Satellites, once passive data collectors, are now evolving into autonomous systems capable of independent maneuvering, self-diagnosis, and even targeted intelligence gathering.

However, the rise of AI-powered satellites poses significant challenges to existing international space law. The current legal framework, primarily based on the 1967 Outer Space Treaty, assumes human control over space activities, leaving a “human-AI gap” in accountability.

“The core legal dilemma is fault attribution: who is liable when an AI’s decision causes a collision: the launching state, the operator, the developer, or the AI?” ask Shrawani Shagun, a PhD candidate at National Law University, Delhi, and Leo Pauly, founder and CEO of Plasma Orbital, in their analysis.

Furthermore, the dual-use nature of AI, with both civilian and military applications, creates the potential for misinterpretation and escalation in already tense geopolitical situations. An AI malfunction or misjudgment could trigger a diplomatic crisis or even military conflict.

Experts are calling for urgent international collaboration to develop new governance frameworks. These frameworks should categorize levels of satellite autonomy, enshrine meaningful human control, and establish global certification procedures to ensure the safety and reliability of AI-powered systems. Models from the aviation and maritime sectors, where strict liability and pooled insurance are used to manage risk, could inform the development of space law.

The authors urge global safeguards to prevent an arms race in space. With thousands of autonomous satellites projected to operate in low Earth orbit by 2030, the risk of collisions, interference, and geopolitical misinterpretations is rapidly increasing.

“We are entering an era where the orbits above us are not just physical domains but algorithmically governed decision spaces,” the authors conclude. “The central challenge is not merely our ability to build intelligent autonomous satellites but our capacity to develop equally intelligent laws and policies to govern their use.”